A campus is like a small city-state, made up of “citizens” with a clearly defined community boundary and a shared system of governance. Unlike most other spatial zones, campuses tend to have highly specialized or mediated functional layouts driven by the purpose and needs of the “governing” body, whether it is a corporate, health or education system. A campus environment benefits from vastly reduced scale and complexity compared to most cities. While campuses have “tourists” in the form of visitors, they do not usually have the complex border issues that nations face around immigration and naturalization. Most campuses have the added benefit of being closed systems where all of the users are generally “known” and have a relationship to the governing institution, whether as employees, patients, students, partners, etc. This relationship includes the reciprocal responsibility of the campus owners to ensure the safety, security and success of all of those inhabitants.

That is not to say that campuses don’t suffer from the same challenges as municipalities or countries. Campus organizations are responsible for infrastructure requirements, fiscal policy and social maintenance as well as community building. Most modern campuses have evolved over time just like cities and nations, which means that they also suffer from aging infrastructure, outmoded functional spaces and shifting socio-cultural movements. It is within this framework that the opportunities for data and technology provide a backbone for new spatial experiences and campus planning possibilities.

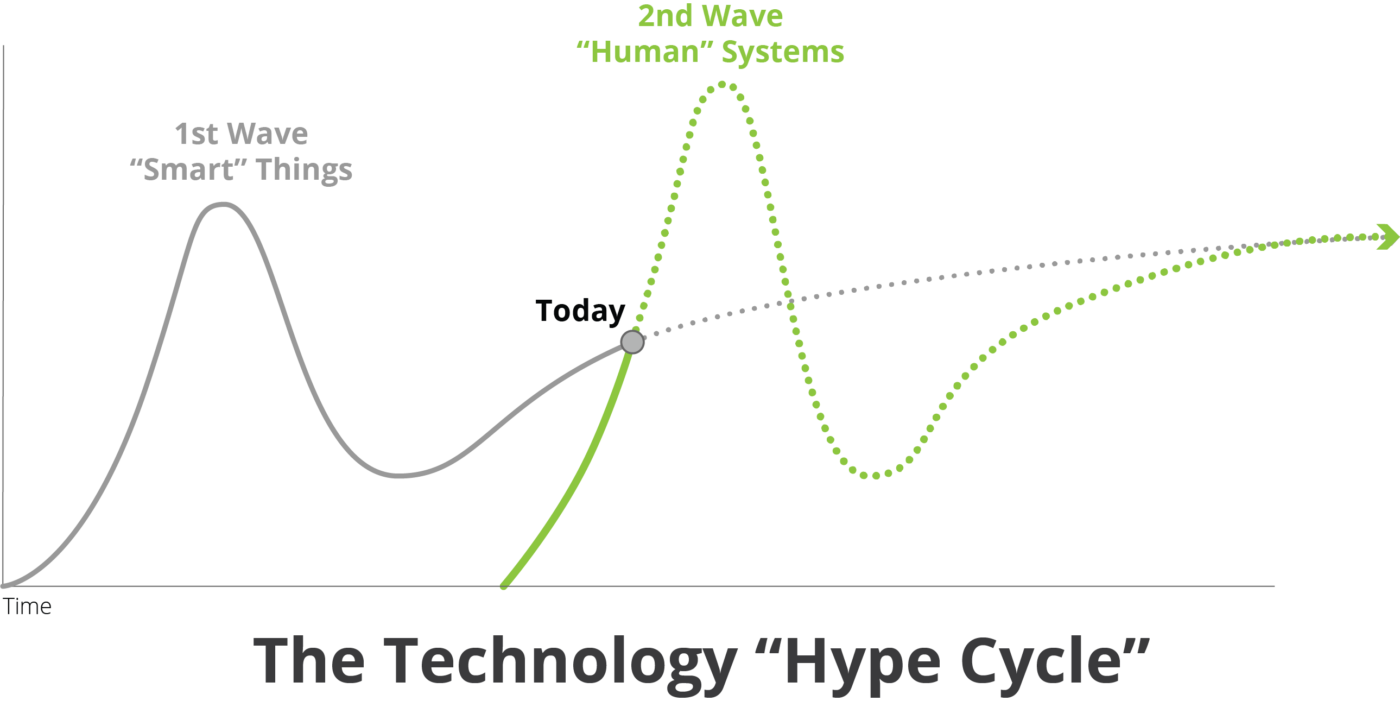

The emergence of "smart" human systems

A host of new UX (User Experience)-driven technologies are emerging with the direct potential to change how users pattern their behavior in the digital realm, and increasingly in the real, physical world. These new digital-physical UX opportunities will unlock new potential for spatial design, as well as providing powerful platforms for evolving the design process. The technologies depicted below are all evolving at the speed of tech startups, which is to say many times faster than we are used to in most industries. There are two main features to note about each of these technology offerings: they are all platform products from technology companies, and they all use Artificial Intelligence to drive or advance their capabilities.

While few of these technologies (other than IoT) are directly spatial, together they comprise a new way of driving digital-physical interactions which could have long-term impact on spatial experiences. Together, they offer unprecedented opportunity to connect users to their environment in newer, smarter ways. At the scale of a campus they have the potential to transform the experience for patients, students, guests and facility administrators. If the “first wave” of embedded IoT technology was geared towards making the components of systems connected (and therefore smarter), the next wave is focused on choreographing these systems to create new experiences in the spatial realm. This takes place in two critical modes:

1) Direct response and control, enabled by embedding networking, intelligence and sensors in the products and control systems themselves, and

2) Analytical modeling, which uses the event and system data and logs generated by these devices to monitor, analyze and eventually predict usage and behavior across entire ecosystems.

Both of these transformations are used to drive changes to interface: the way users interact with system elements.

The focus on interfaces is in part an outcome of the rise of smartphones and ubiquitous computing. It is no longer necessary to take out a laptop or find an internet cafe; large populations throughout the world have networked super-computers in their pockets at all times. That continuous local compute has changed the way people interact with goods and services in fundamental ways. It is possible to shop and check out without taking out a credit card or speaking to a check-out person. When traveling around the city (local or foreign) people have the choice between looking up real-time continuous directions or just hailing a ride to take them where they need to go. In either case the user doesn’t need to describe or indicate where they are located, just where they want to go. In many of these examples the user is inhabiting an infinite virtual space through an interface which eliminates distance, supplants locale and simultaneously connects and disconnects.

The changing spatial paradigm

The past decade has seen an incredible shift in user expectations when it comes to products and services. When people are used to flexible and powerful app experiences that transform their daily lives, their expectations are raised in all other areas as well. While it is true that meeting these demands in campus spatial environments is more challenging, the expectation that user needs can be addressed more directly and personally still remains.

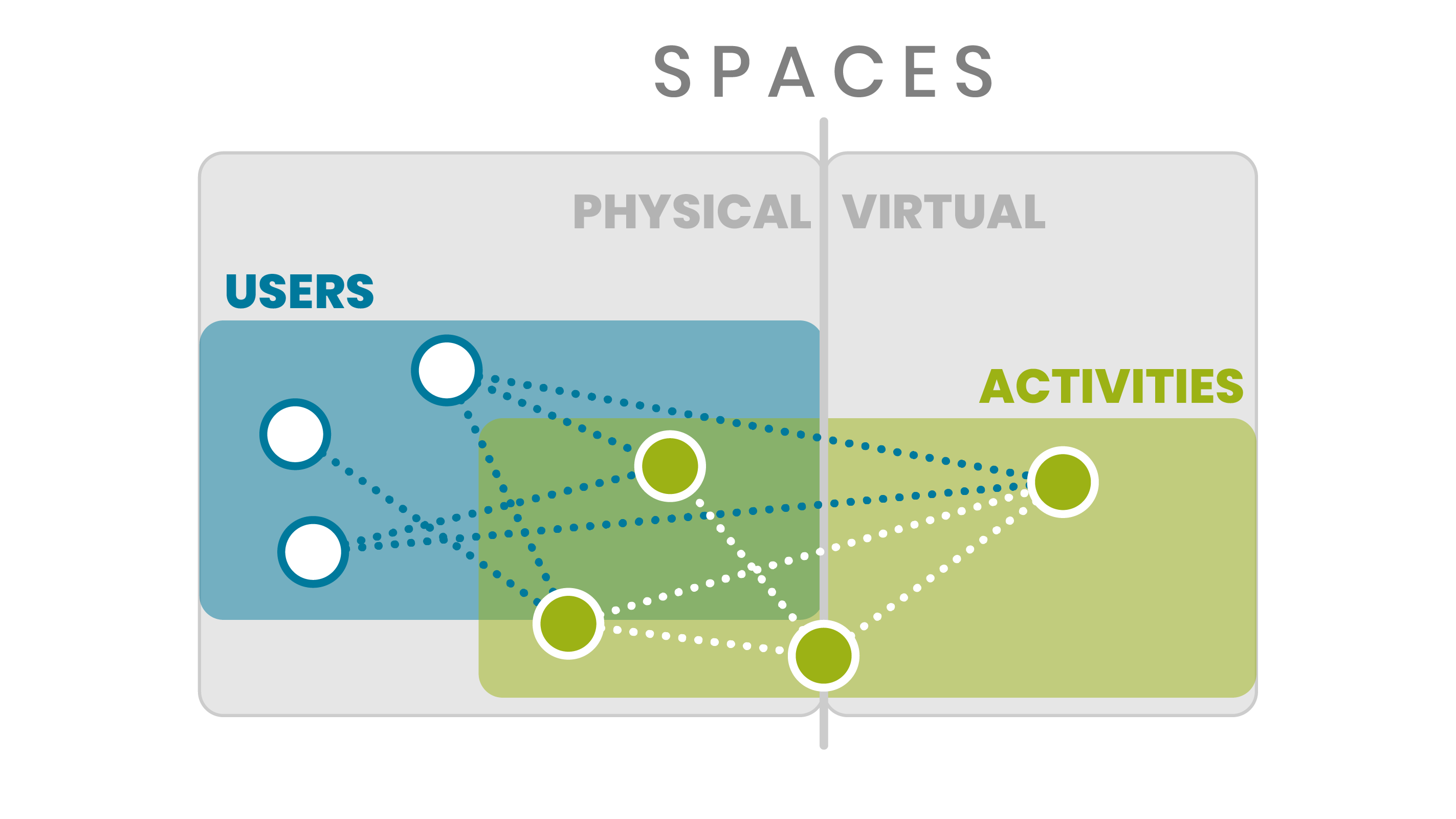

Planning for multiple uses often goes awry and is subject to misinterpretation and miscalculation. Correctly predicting the activities and behaviors of users in a space is the first non-trivial problem. Beyond that, there are the inherent design challenges of environmental comfort and safety. In designing spaces for many activities, the risk is high that no single activity will receive an ideal environment.

All users exist in some physical space regardless of actual presence. They engage in activities, alone and together, which take place in physical space, virtual space or both.

How might interface thinking transform campus design?

On Demand

DIGITAL

Technology is increasingly moving in the direction of on-demand applications and services. This is one of the key features of transformative companies which have disrupted industries with their focus on the interface. The interface allows users to connect to commodities and services so well that it becomes the first and most important layer–one that is always available when needed and fades to the background when the task is finished.

SPATIAL

Aware

DIGITAL

Awareness of context and surroundings is a key feature of the best applications and environments. This can range from something as simple as shifting the tone and color of a screen to reflect time of day or ambient conditions to more complex location-aware responses. Why present a menu of options for all conditions when the selections can be pre-filtered based on current context? Better yet, why not automatically trigger the correct action?

SPATIAL

Networked

DIGITAL

One of the features that drives the interface of most apps is their always-on network connections. This means that data can be instantly accessed or pushed to the device to enable interactions. Even the subtlest UI elements can utilize this potent connectivity to drive experience.

SPATIAL

Targeted

DIGITAL

Most successful applications are highly specific and functional. They focus on streamlining and augmenting the interaction and usability of a specific task or activity. By leveraging that extreme focus, they succeed in transforming the user experience. This is often supported by the combination of contextual data (see Aware) and personal user information.

SPATIAL

Where to apply the concepts

These are only a few examples of how the features of successful application interfaces can be translated to spaces. Just using the four concepts above, we can begin to see how a framework for a spatial interface might come together. Networked and Aware concepts are closely linked as together they enable spaces to track conditions and respond based on contextual understanding of time, environment, and users the same way a mobile application interface can connect to data sources and device sensors. On-Demand and Targeted are also a natural pairing. When spaces serve multiple purposes for different users at the same time, each use should be carefully curated and tailored to the user. Linking apps and spaces can allow for the same kind of customized user experiences by augmenting spatial experience with a rich digital layer. Spatial experience can be defined by both the physical characteristics of a space as well as the functional; intelligent way-finding is one example of how the experience of a space could be tailored to different users to direct them to spaces suited to their exact needs.

When it comes to embedding new technology into the built environment, the first and foremost challenge is usually one of policy. In order to bring new developments into the domain of a city or country, there are usually significant hurdles to overcome just in identifying who will take ownership and responsibility over the application and development of that technology. The problem is usually compounded due to inherent tension between government and population when it comes to issues of information and surveillance. Beyond that lies the challenge in defining narrow use cases for the technology in the face of the innumerable complexities and unique conditions of urban environments. Finally, there is the significant breadth of challenges facing most cities with limited resources to address all problems. The first two problems are almost completely non-issues in a campus environment. The campus administrator has incomparable responsibility and control for almost every area their users inhabit. There is no truly “public” domain on most campuses, just the shared spaces where generally “known” users interact with the institution and each other. And without the complex nuance and demographics of a city, the campus generally has specific requirements and challenges which are easier to identify and potentially address. The latter is aided by the fact that the campus administrator generally controls all layers of physical and digital infrastructure on the campus.

Buildings on campuses are already interfaces. On education campuses buildings are often specialized by typology: residential, athletic, library, class, lab, administration, etc. Depending on scale and spatial inter-relation, these buildings may contain different services and amenities which are also sometimes combined into service buildings. On medical campuses there are usually similar distinctions for different hospitals, specialty centers, ER, education, and so forth. In many ways, the buildings themselves already serve as an interface for the different functions of the host institution. As these buildings gain smart features with the application of connected systems, they can begin to function more responsively. By augmenting this relationship with digital user layers, the campus can begin to extend the personal functionality and customizability of buildings for all users across the day, week, season or even over years. In order to build this capability in the future, the first building blocks required are data. Data can enable new services and capabilities, which in turn can generate new and more valuable user input. If these elements are well balanced, the positive feedback and reinforcement cycle can develop truly responsive environments on the physical and digital plane.

Methods of information and data gathering

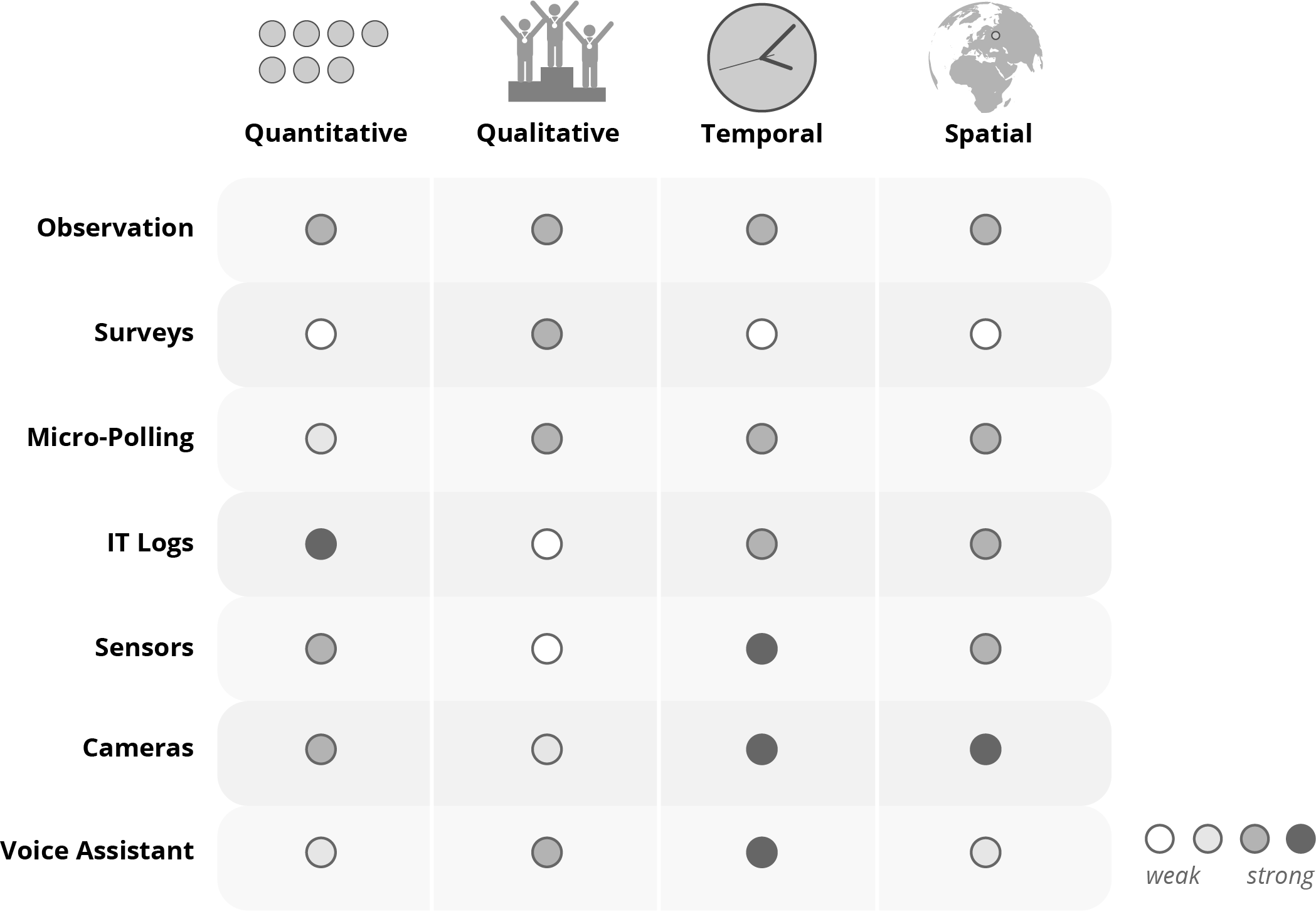

When we consider data about users and spaces there are two main measures to consider: the quantitative and the qualitative. Often observational data is concerned with counting and logging users and activities in time and space. How many people of what kind–“who”–are at a given location–“where”–at a certain time–“when”? Beyond the quantitative and temporal data recorded during observation, there are also the qualitative measures of “what” users are doing and “how” the space and users perform during activities. Then there is the most complex question of all–“why”–which gets to the root of people’s behavior and experience. Observation often requires a degree of expertise or ability which scales with the complexity of the phenomena under study. Almost anyone can count or evaluate basic behaviors; it takes real experts to extract higher-level insights. Sometimes basic observational data is taken as a whole and analyzed by experts to “extract” likely conclusions via induction or deduction.
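As a rough illustration of the who/where/when/what framing above, the sketch below models a single observation as a record and derives a simple quantitative count from it. The `Observation` class, its field names and the sample values are all hypothetical, chosen only to mirror the questions in the text; the "how" and "why" questions are deliberately left out because they are not simple fields to log.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Observation:
    """One logged observation; all field names are illustrative."""
    who: str        # user category, e.g. "student"
    where: str      # location label
    when: datetime  # time of observation
    what: str       # observed activity (a qualitative note)


def count_by_place_and_hour(observations):
    """Count observations per (location, hour of day): the quantitative view."""
    return Counter((o.where, o.when.hour) for o in observations)


# Made-up sample observations.
observations = [
    Observation("student", "library", datetime(2024, 3, 4, 9, 15), "studying"),
    Observation("student", "library", datetime(2024, 3, 4, 9, 40), "group work"),
    Observation("faculty", "cafe", datetime(2024, 3, 4, 12, 5), "meeting"),
]

counts = count_by_place_and_hour(observations)
print(counts[("library", 9)])
```

Counting is the easy part; the qualitative `what` field is where observer expertise starts to matter.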

The other main method used to study spatial interaction is the survey. The ideal survey would use expert sociologists to administer clinical-level questions to individuals selected by sample and record observational data or neuro-physical response to ascribe confidence levels to the individual question results. In reality, most surveys are distributed via digital communication channels and have uneven or unpredictable response rates. With the new ease of deployment, they often take the place of costly observation studies as well, asking users to self-evaluate and ascribe values to temporal events or behaviors. Intercept surveys or micro-polls begin to overcome these deficiencies by engaging users in brief sessions at the time of activity to log qualitative responses to specific spatial/temporal interactions. However, this re-introduces the human resource challenges of observation-based studies. Even without experts, these methods of data capture are time consuming and expensive. Within this existing framework, there are now new opportunities to employ technology and overcome the challenges outlined above.

The table below highlights the strengths and weaknesses of different methods. We have added the following items to the methods described above: IT (Information Technology) Logs, Sensors, Cameras and Voice Assistants.

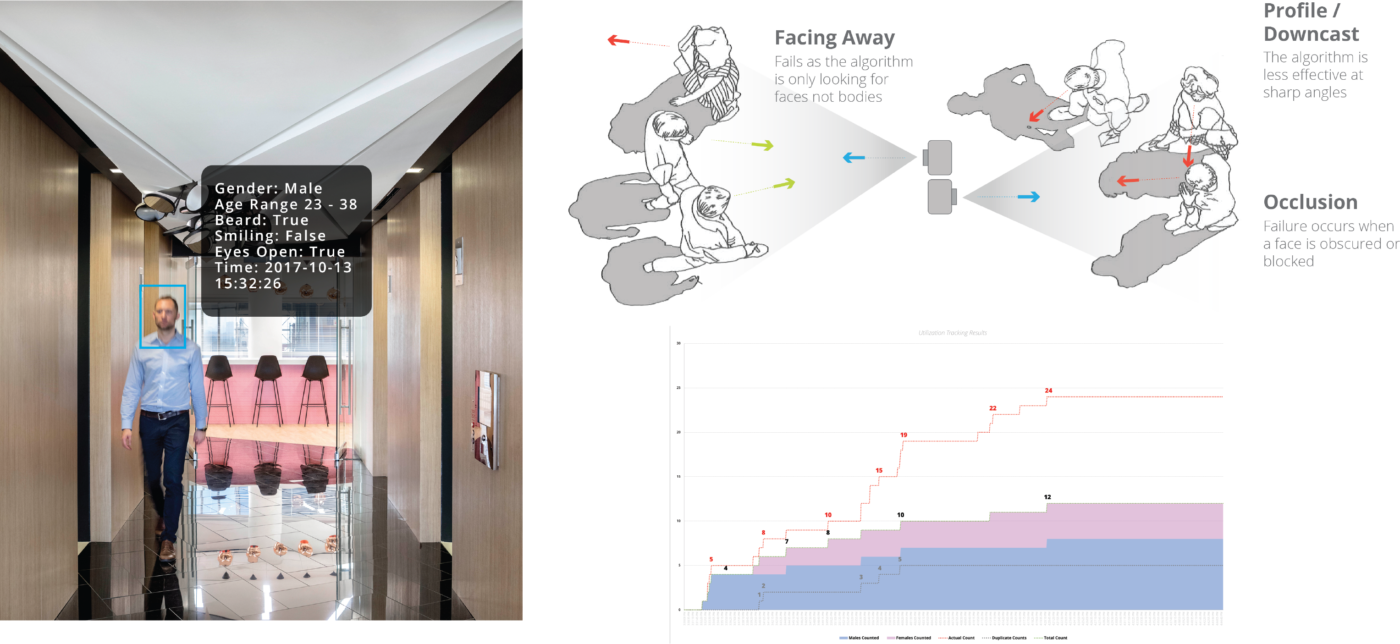

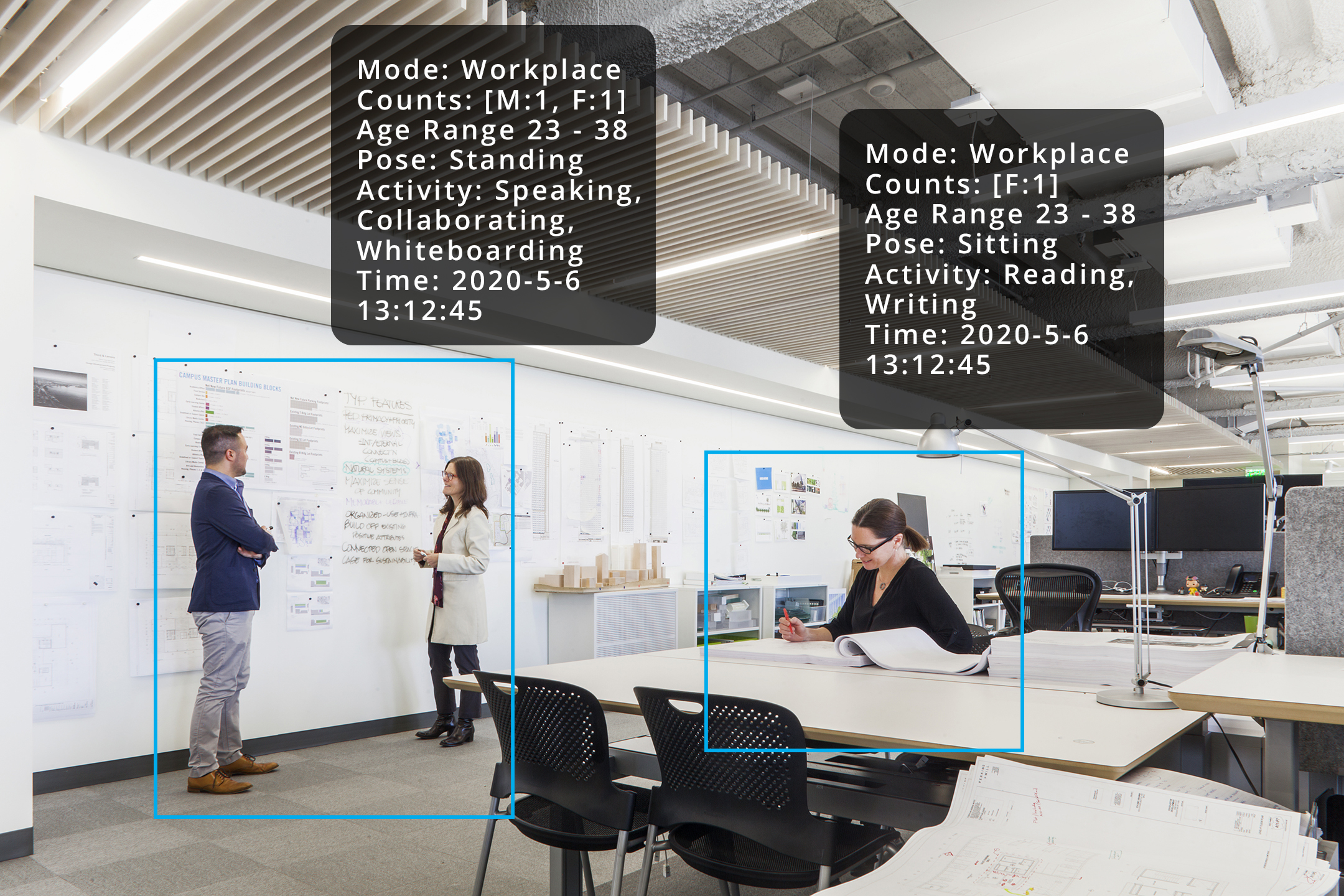

Cameras

Cameras with cloud connectivity can run AI algorithms capable of counting people and objects, and potentially of "reading" complex qualitative actions in the field of view.

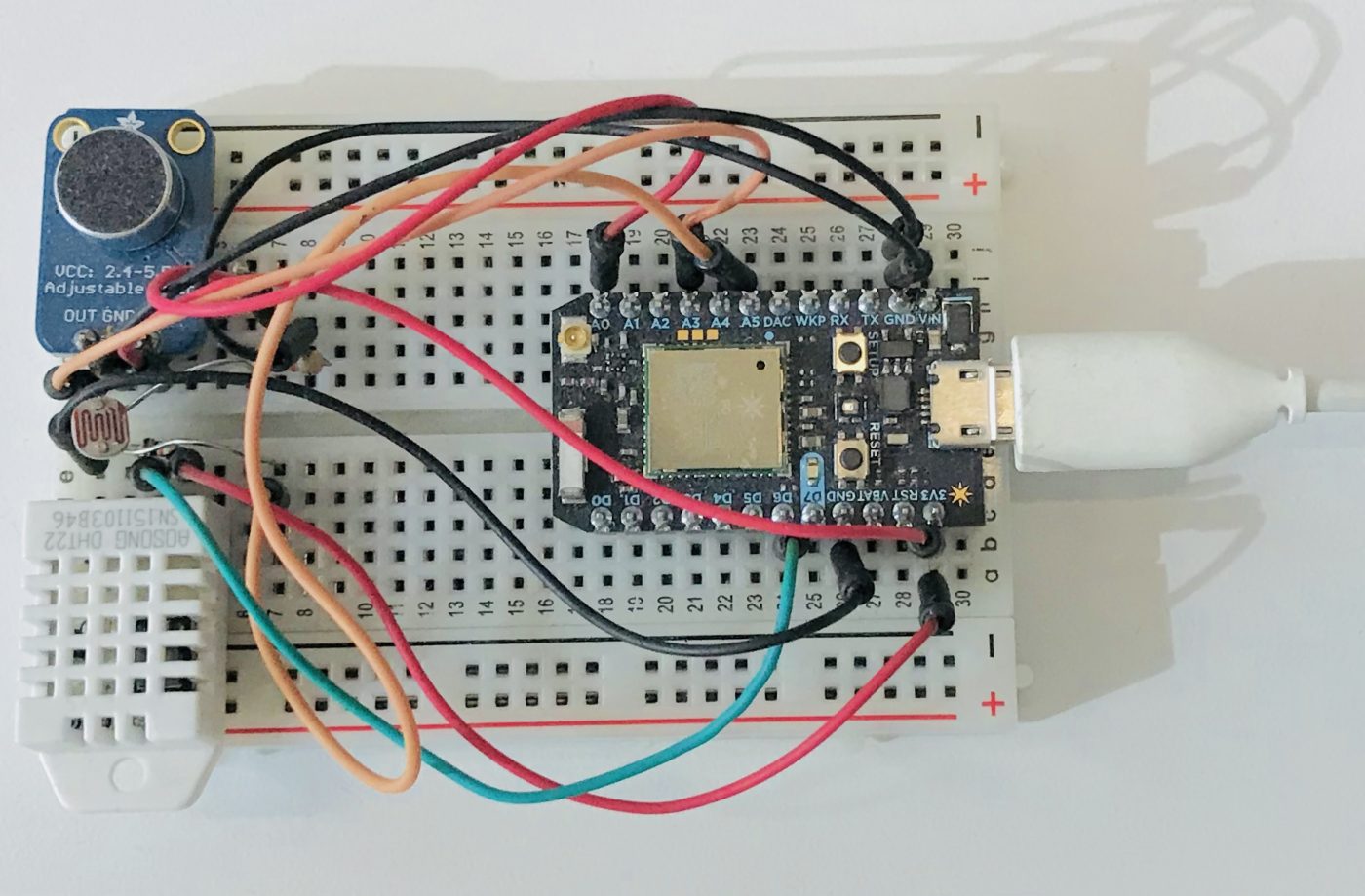

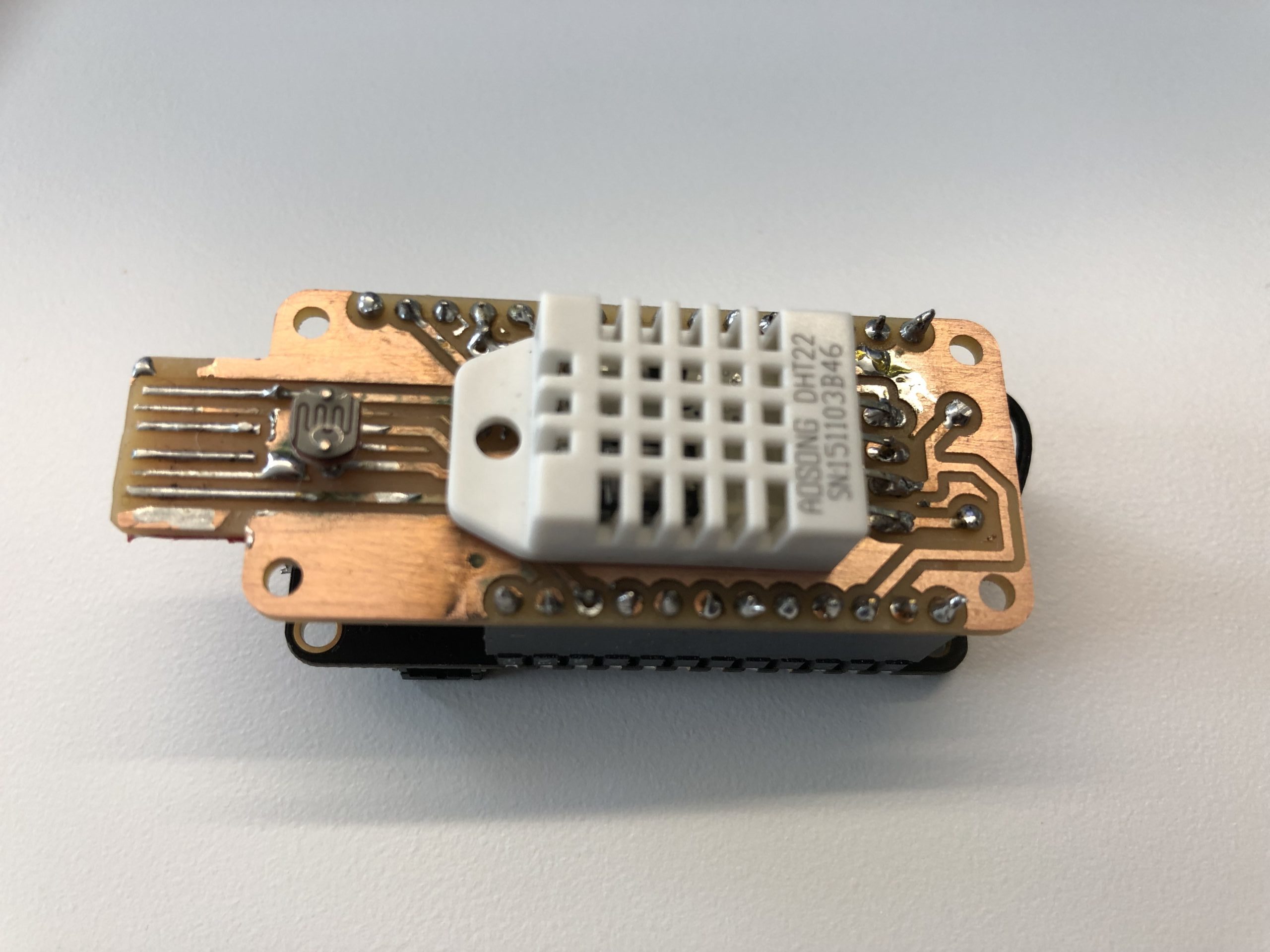

Sensors

Wi-Fi-connected sensors can transmit real-time data about environmental conditions (such as light, temperature, sound) and register button presses and other responsive interface interactions.

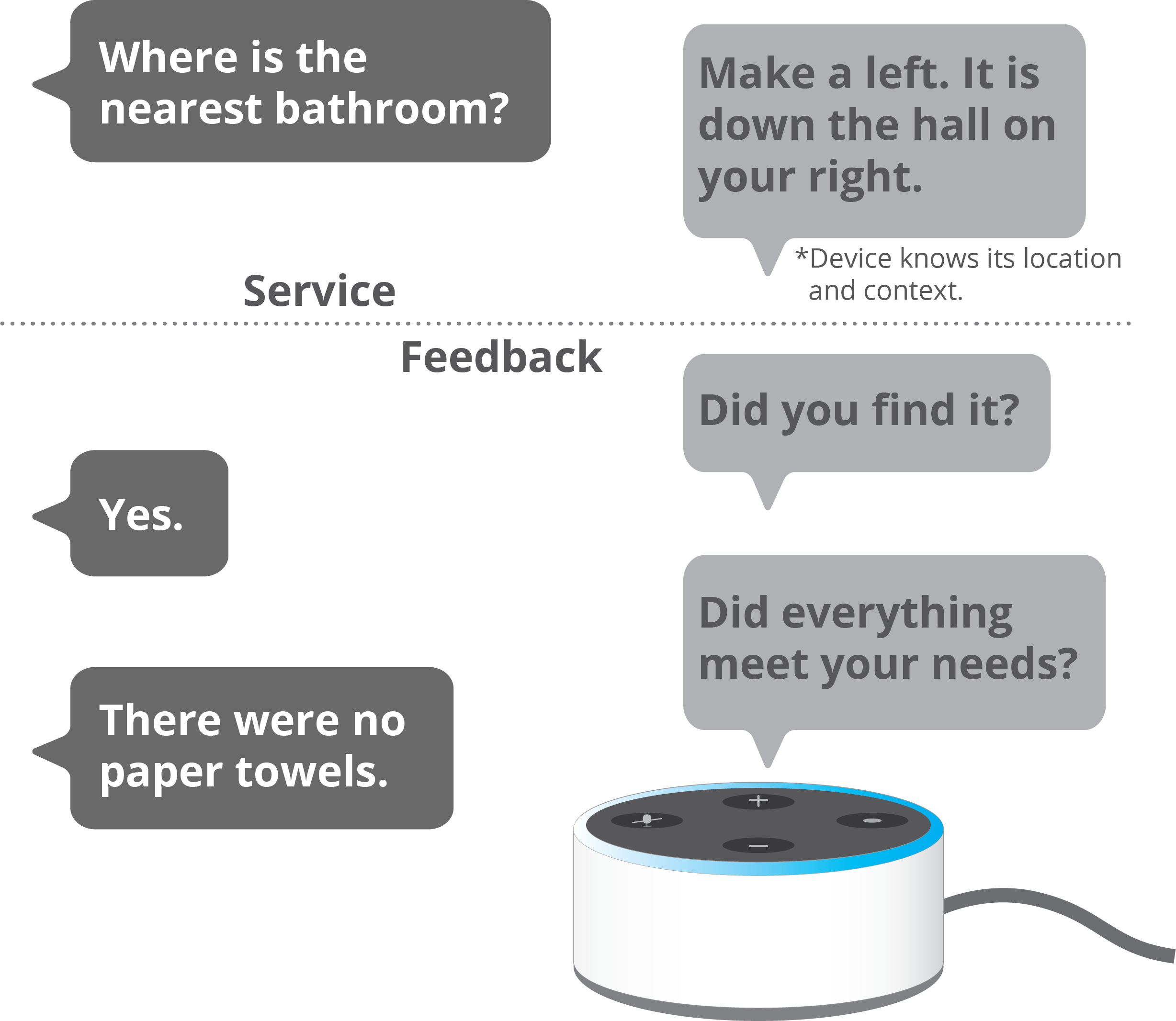

Voice

Voice assistants such as Amazon Alexa or custom chatbots can be used to directly interact with users in space based on pre-defined triggers and without requiring direct human capital for surveying.

IT Logs

Wired and wireless internet connections, enterprise applications, email and VoIP communications and other devices generate timestamped logs of data which can be mined for insight.

Gathering user input without an app

The major downside to mobile apps is getting people to install and use such an app, as well as development concerns around maintenance, privacy and battery consumption. While there is no question that an “app” layer has a meaningful place on a campus (where there is already continuous need for 2-way communication between users and the institution), there are many other layers where technology can be embedded to serve similar functions without undue burden on the user. If these other technologies can offer new digital services to the campus user, there is much more likelihood that they will adopt and use the institutional app. Increasingly we see the future of this space as “edge” devices where sensors, web connections, compute and user interaction take place on a stand-alone device which isn’t tied to a specific user.

Smarter cameras

Using sensors for continuous feedback

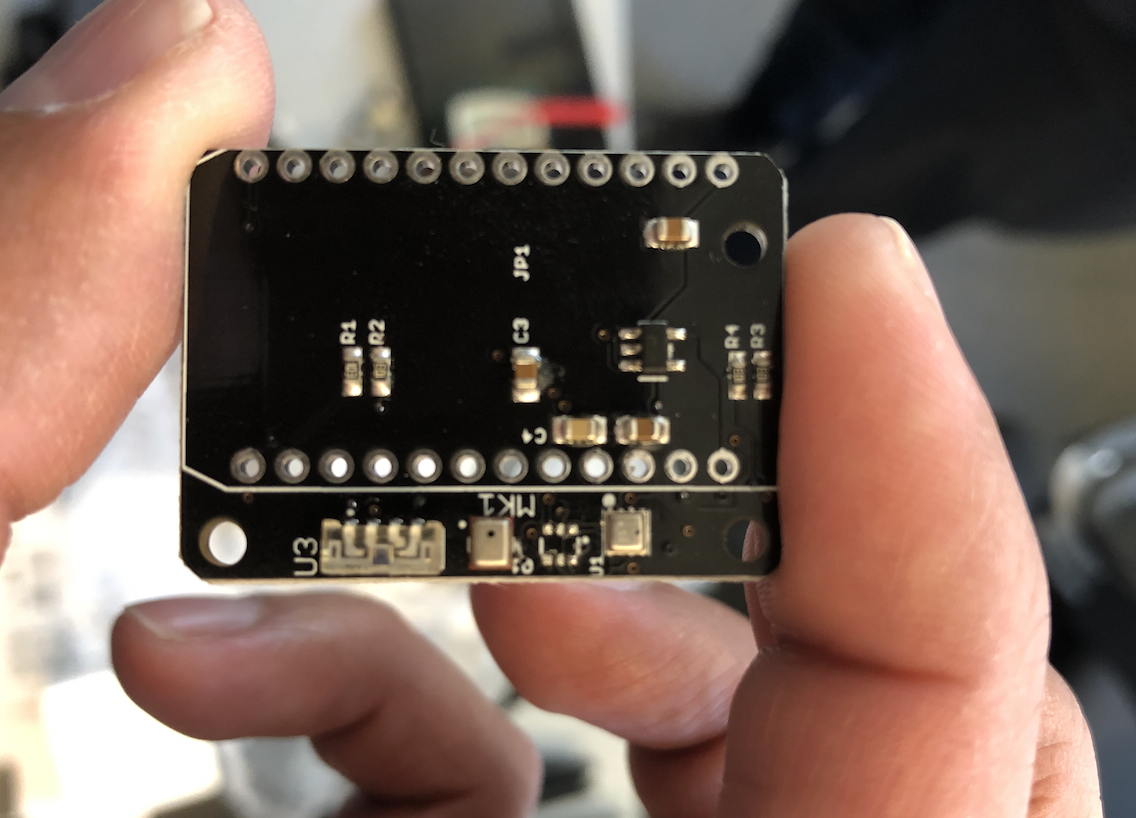

Another way to generate spatial information in real time is with the deployment of custom sensors. These sensors are increasingly affordable, can stay connected continuously and stream data at up-to-the-second rates. A device like this can be produced at the size of a matchbox car for under $50, even at prototype scale.

While this one connects to Wi-Fi, there are versions which can use 3G cellular networks to sidestep local network connectivity issues. All that is required is a 5V USB power supply.

Sensors on the device provide feedback on sound and light levels, temperature and humidity. Real-time streaming data about environmental conditions is crucial for assessing performance and correlating how spatial conditions affect usage and perception of spaces. The best part of using these devices is that they require no surveys or user interaction, yet provide data which grows in value over time and can be triangulated against other data sets.
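To make the idea of up-to-the-second sensor streams more concrete, here is a minimal sketch of one common first step: rolling raw readings up into per-minute averages so they can later be compared against other data sets. The reading format (a timestamp paired with a value) and the sample temperatures are assumptions for illustration, not the output of any particular device.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def average_per_minute(readings):
    """Group (timestamp, value) readings by minute and average each group."""
    buckets = defaultdict(list)
    for timestamp, value in readings:
        # Truncate the timestamp to the start of its minute.
        minute = timestamp.replace(second=0, microsecond=0)
        buckets[minute].append(value)
    return {minute: mean(values) for minute, values in sorted(buckets.items())}


# Made-up temperature readings in degrees Celsius.
readings = [
    (datetime(2024, 3, 4, 9, 0, 5), 21.0),
    (datetime(2024, 3, 4, 9, 0, 35), 22.0),
    (datetime(2024, 3, 4, 9, 1, 10), 23.0),
]

per_minute = average_per_minute(readings)
for minute, value in per_minute.items():
    print(minute.isoformat(), value)
```

The same grouping works for sound, light or humidity; only the bucket size and the summary statistic would need to change.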

Tapping into natural voice interactions

Recapturing your network costs

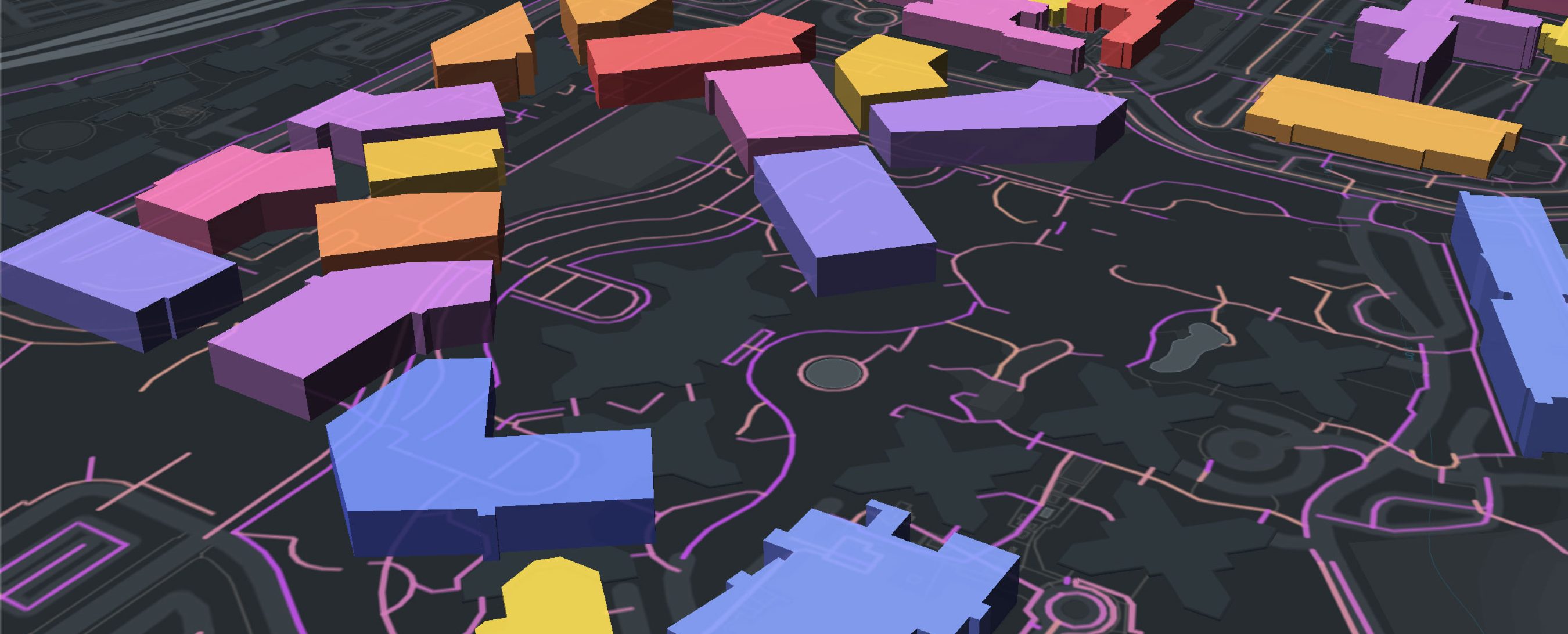

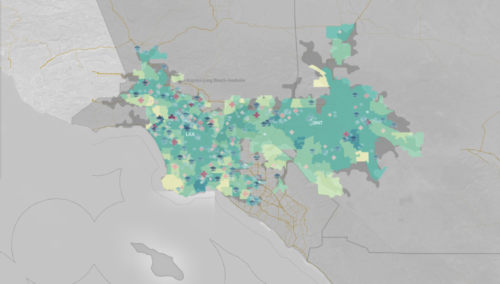

One of the potentially easiest places to begin to mine data on a campus is through IT infrastructure. Modern campuses depend on connectivity, with almost every space on the campus having some form of always-on network connection. Whether the connection is wired or wireless, users throughout most campuses rely on the institutional data network for internet connectivity. Because campuses are generally “closed” systems, everyone who is attached to the institution often makes a secure and recognized connection to the network with unique authentication (an industry best practice). Unlike a city, it is possible to access both location logs and connection metadata for all users based on when they connected to specific access points. User data is generally encrypted continuously, but at a minimum these logs can provide continuous data on gross utilization across a campus over time. Augmenting the log data with specific user features (such as student/faculty/administration, year in school, program of study, etc.) can sometimes be done at the time of logging or afterwards, depending on the system capabilities. Private user information can be secured and removed at all stages and further anonymized by aggregating and scrambling data. Explore a simulated month of user data aggregated to building and floor in the interactive model below.
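The aggregation and scrambling described above might look something like the following sketch, which replaces user IDs with salted hashes and then counts distinct users per building, floor and hour. The log row layout, the function names and the salt are all hypothetical; real access-point logs vary by vendor. Note also that salted hashing is pseudonymization rather than full anonymization, so it is the aggregation step that does most of the privacy work here.

```python
import hashlib
from collections import defaultdict
from datetime import datetime


def pseudonymize(user_id, salt):
    """Replace a user ID with a salted SHA-256 digest (pseudonymous, not anonymous)."""
    return hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()


def unique_users_by_floor_hour(log_rows, salt):
    """Count distinct pseudonymous users per (building, floor, hour)."""
    seen = defaultdict(set)
    for user_id, building, floor, connected_at in log_rows:
        hour = connected_at.replace(minute=0, second=0, microsecond=0)
        seen[(building, floor, hour)].add(pseudonymize(user_id, salt))
    # Only the counts leave this function; the hashed IDs are discarded.
    return {key: len(users) for key, users in seen.items()}


# Simulated rows: (user ID, building, floor, connection time).
log_rows = [
    ("u1", "Library", 2, datetime(2024, 3, 4, 9, 5)),
    ("u1", "Library", 2, datetime(2024, 3, 4, 9, 50)),  # same user reconnecting
    ("u2", "Library", 2, datetime(2024, 3, 4, 9, 20)),
    ("u2", "Science Hall", 1, datetime(2024, 3, 4, 10, 10)),
]

utilization = unique_users_by_floor_hour(log_rows, salt="example-salt")
for key, count in sorted(utilization.items()):
    print(key, count)
```

Counting distinct users rather than raw connections avoids inflating utilization when a single device reconnects several times within the hour.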

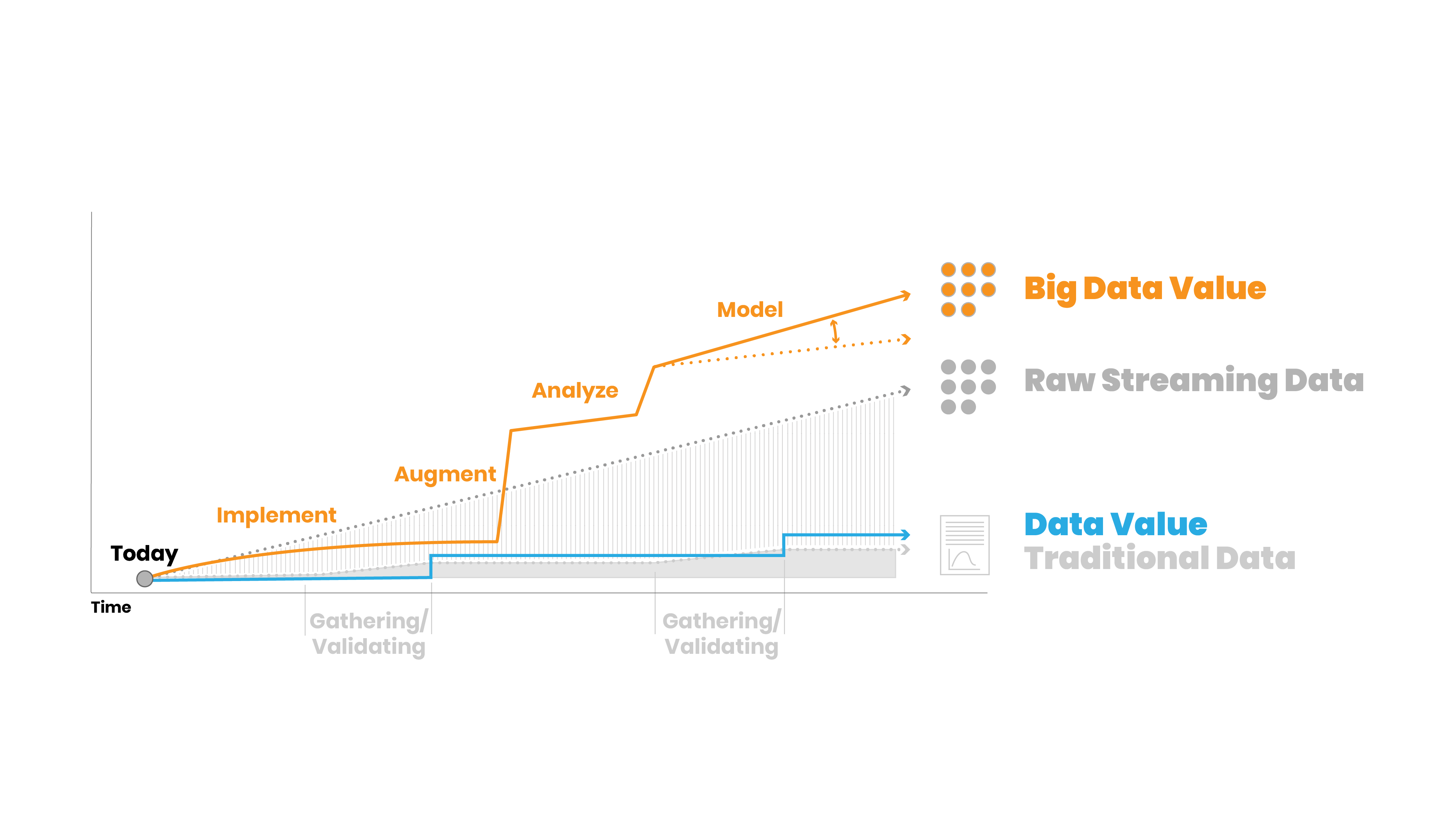

The challenges and opportunities of big data

The systems identified here all have the potential to generate continuous data at the scale of an entire campus’s worth of buildings, rooms and open spaces. The raw information in these big data sets and streams is continually updating and growing based on automated output from systems and sensors. However, the basic reality remains that most problems–and most data sets–usually only deal with small to medium data. The data may exist beforehand or be generated using traditional methods of gathering and experimentation. The skills and infrastructure needed to store, maintain and secure the underlying data streams are very different from those needed for traditional analytic use cases. In particular, there is an enormous volumetric difference between traditional data sets and raw “Big” data sets.

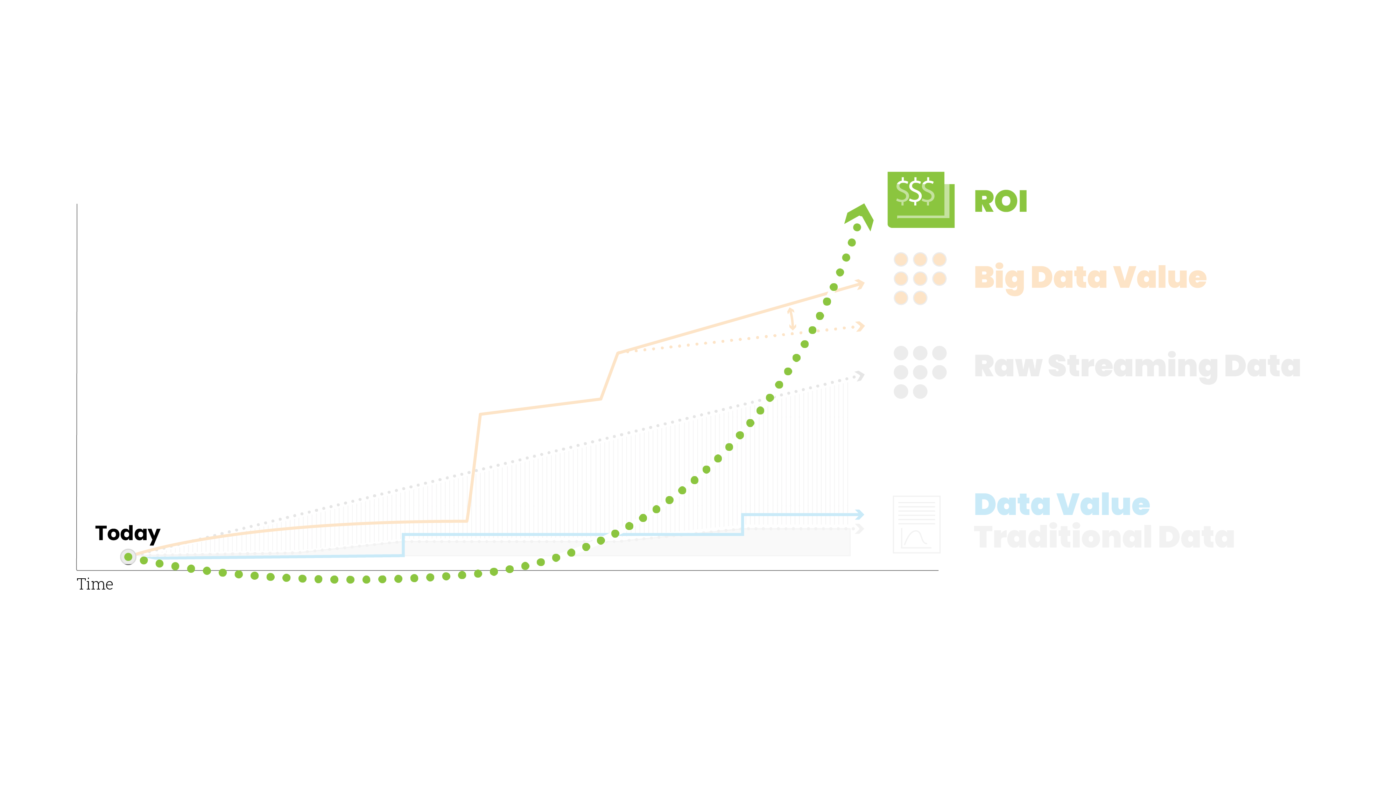

One of the advantages of the traditional methods of data gathering is that the data is often predetermined by the research methodology (survey, study, etc). While there are challenges to that approach, even the raw data itself is often quite reliable as it is validated at many stages. Big data is generally just “raw.” However, we also need to look at the value of the data. The value of traditional data might be seen to increase once it is collected and validated. Since it was gathered/generated for a specific use it holds its value consistently, but it does not increase until the next round of experimentation or collecting. Big data is continuously growing. Even in its most unrefined form there is potential value growth based on its volume and history. Let’s take something like sales data for example. If you are tracking all transactions over time, there is inherent value to that data set as it grows. If you then augment the data with information about product categories or user demographics for purchasers, the entire data set is now much more valuable.
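The sales example can be sketched in a few lines: a raw transaction log becomes more useful once each row is joined to a category lookup added after the fact. The product IDs, amounts and categories below are made up, and `revenue_by_category` is a hypothetical helper rather than part of any real system.

```python
from collections import defaultdict

# Made-up transactions: (product ID, amount).
transactions = [("p1", 4.50), ("p2", 12.00), ("p1", 4.50), ("p3", 3.00)]

# Hypothetical lookup table added after the transactions were collected.
product_categories = {"p1": "coffee", "p2": "books", "p3": "coffee"}


def revenue_by_category(transactions, categories):
    """Join each transaction to its category and total the amounts."""
    totals = defaultdict(float)
    for product_id, amount in transactions:
        # Products missing from the lookup are grouped under "unknown".
        totals[categories.get(product_id, "unknown")] += amount
    return dict(totals)


totals = revenue_by_category(transactions, product_categories)
print(totals)
```

The raw log was never changed; the extra value comes entirely from the augmentation, and it applies retroactively to every transaction already recorded.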

Of course, we also have to consider the challenges to adopting this (or any) radical new approach. In the case of big data, besides the challenges to the traditional ways of thinking, there is also the cost of adoption. It generally takes a fair amount of effort to put in place or build out the systems required to generate or harvest big data. And because the data-first methodology requires some meaningful amount of data to mine for insights, there is often an extended period of negative Return on Investment (ROI) which you must suffer through patiently. Once you begin to augment and increase the value of the data stream, the ROI begins to increase dramatically.
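The shape of that ROI curve can be illustrated with a small worked example. The sketch below assumes ROI is measured as (cumulative value minus cumulative cost) divided by cumulative cost, and uses entirely made-up figures with a heavy up-front cost and value that grows as data accumulates; neither the formula choice nor the numbers come from a real deployment.

```python
from itertools import accumulate


def cumulative_roi(costs, values):
    """ROI per period as (cumulative value - cumulative cost) / cumulative cost.

    Assumes the first period has a positive cost, so the divisor is never zero.
    """
    return [
        (value - cost) / cost
        for cost, value in zip(accumulate(costs), accumulate(values))
    ]


def break_even_period(costs, values):
    """Return the first period index with non-negative cumulative ROI, or None."""
    for period, roi in enumerate(cumulative_roi(costs, values)):
        if roi >= 0:
            return period
    return None


# Made-up figures per period: build-out cost first, then running costs.
costs = [100, 20, 20, 20, 20]
values = [0, 10, 40, 80, 160]

for period, roi in enumerate(cumulative_roi(costs, values)):
    print(period, round(roi, 2))
print("break-even period:", break_even_period(costs, values))
```

With these numbers the cumulative ROI stays negative for the first four periods and only turns positive in the last one, which is the patient stretch the text describes.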

Where to begin

There is a concept emerging in the physical-digital realm known as a “Digital Twin”: a perfect real-time digital spatial model which accurately tracks real-world activity. A campus is likely to be the first location of a true digital twin, even if it is many years away. Many of the technologies and examples demonstrated above have mostly found their application in retail or home environments and as consumer products, and the challenges to applying them in uncontrolled environments are immense. In a retail setting there may be cameras and apps, but there is also a cash transaction to drive the value for both parties; users will give up some of their personal information and preferences in exchange for discounts or better products. In the public domain and urban setting there is a much higher degree of expected privacy and few shared infrastructures with which to easily log and generate spatial, locational or user data. The home sphere is generally the most intimate and personally tailored and thus provides the best glimpse of the future.

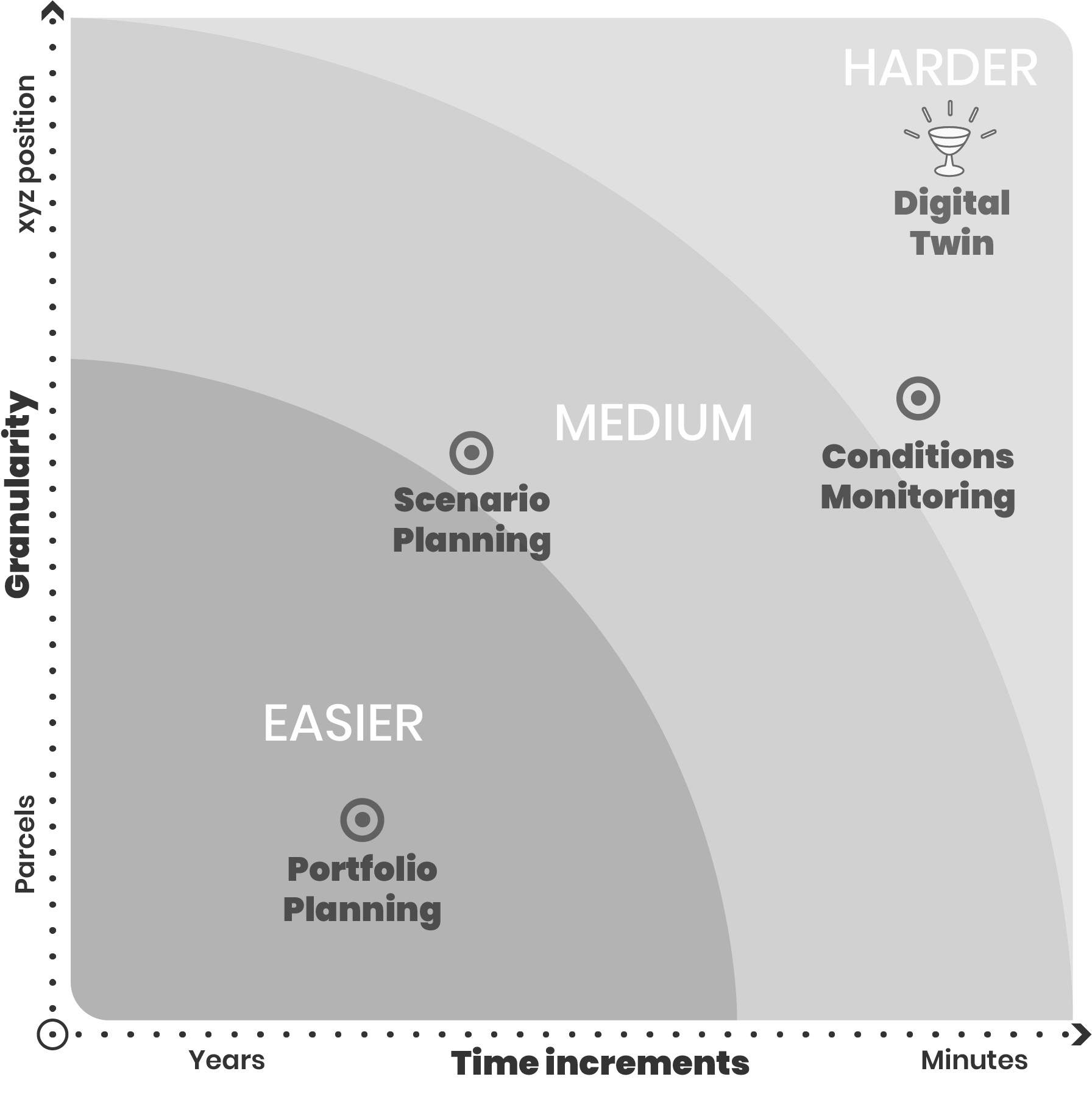

The opportunities to build new spatial interaction are ideally suited for a campus due to the close user relation and contained institutional nature of the campus setting. In many cases, users such as students or medical professionals spend more time on campus than at home. All the technologies explored herein have the potential to add new service layers, provide greater user customization and better efficiencies in the spatial environment, especially when working in concert. The digital twin is defined by its spatial granularity and real-time scale and is by far the holy grail of what might be achievable in the next few decades. By exploring those two spectra, however, it is possible to find the easier opportunities in the campus domain. One of the first places to start is in portfolio and master planning, where it is possible to leverage historic data to analyze, understand and plan future investment and direction. As one moves closer to predictive models and greater specificity and spatial detail, intelligent scenario planning becomes a possibility. Real-time conditions monitoring of specific spaces and features such as utilization and user behaviors is much more difficult and will take time to implement. It is there that the true ROI will begin to show as models improve and a tight feedback loop between users and spaces can allow for new responsive spatial interactions.

The good news is that all of these technology opportunities continue to advance in sophistication and scale with each passing year, and the barriers to entry in purchasing, deploying and consuming data output are far lower than they have ever been. Campuses are environments where there is constant investment and re-investment to support institutional functions. It is critically important for campuses to adopt digital awareness going forward in order to make the best decisions for infrastructure planning and beyond. Even the simplest steps to advance data harvesting or generate spatial user data can pay dividends in the future.